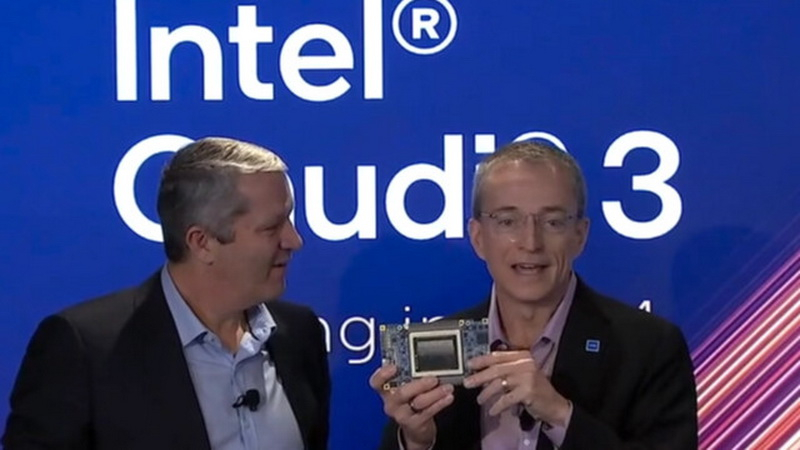

At its AI Everywhere event, Intel showcased its latest AI hardware, including the Core Ultra consumer mobile processors and the 5th Gen Xeon Scalable server processors. Intel CEO Pat Gelsinger also showed the upcoming Gaudi3 AI accelerator, which is set to launch in 2024. Intel is positioning the new accelerator as a more affordable alternative to solutions from NVIDIA and AMD.

Gaudi3: A Sneak Peek

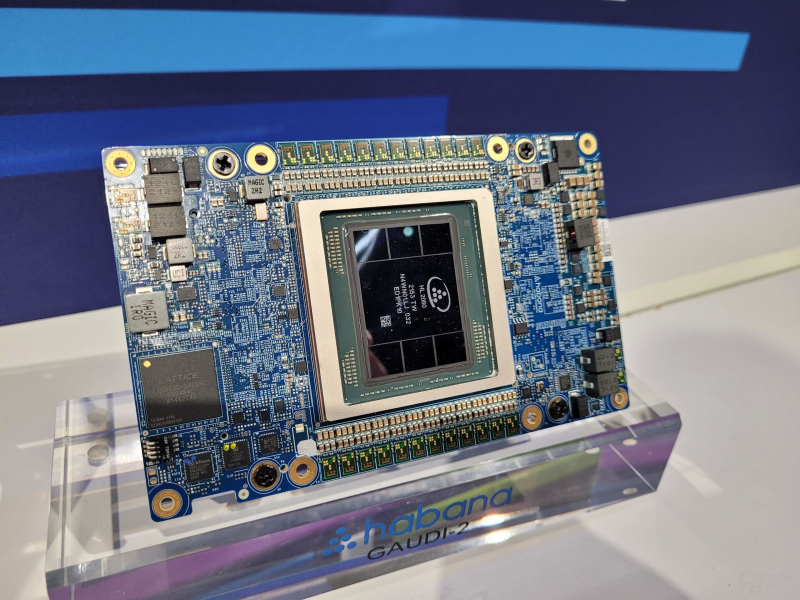

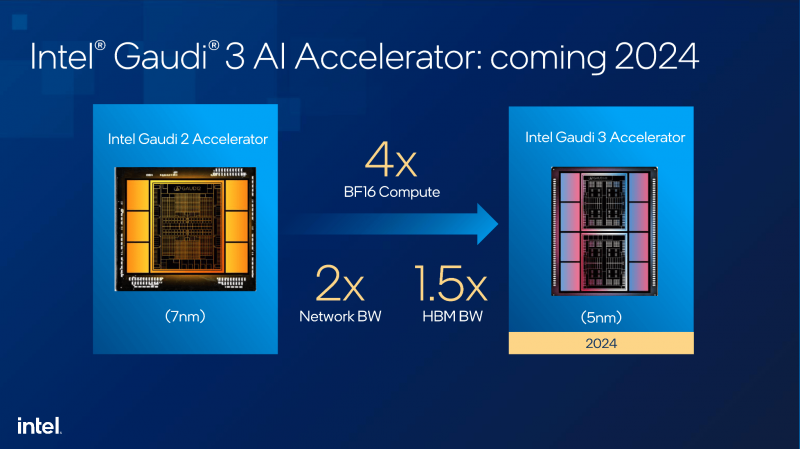

Gaudi3 is currently undergoing testing in Intel’s laboratories. Gelsinger presented a prototype consisting of a printed circuit board with a large accelerator chip surrounded by eight stacks of high-bandwidth memory (HBM). That is two more than Gaudi2, which carries six HBM2E stacks. Gaudi3 is expected to deliver significantly higher performance than its predecessor, with power consumption similar to or slightly higher than Gaudi2’s 600 W.

Performance Comparison

Intel acknowledges that, in terms of raw performance, Gaudi3 will not be able to match NVIDIA’s H100, the H200 due in the second half of 2024, or the Blackwell-based B100 that will follow. However, as the AI computing segment keeps growing, Intel believes that not every use case requires solutions as large and power-hungry as those offered by its competitors.

Market Competition

Intel is confident in its ability to compete in the AI accelerator market by offering an attractive alternative with a favorable price-performance ratio. Gaudi2 has already carved out its niche in the market, as NVIDIA solutions are often sold out months in advance, and AMD’s MI200 series accelerators are produced in limited quantities. As a result, sales of Gaudi accelerators are rapidly increasing.

Gaudi3 Offerings

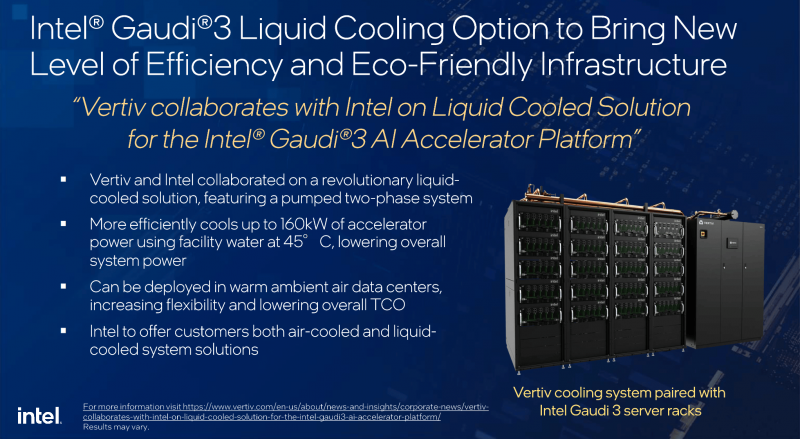

Gaudi3 accelerators will be available not only as standalone modules but also as part of ready-made, liquid-cooled systems. To deliver these, Intel is partnering with Vertiv, a company that provides critical digital infrastructure such as power and cooling equipment. Notably, NVIDIA is also a long-standing Vertiv partner.